How Reinforcement Learning Optimizes Inventory Management

Reinforcement Learning (RL) is reshaping how businesses manage inventory by enabling smarter, real-time decision-making. Unlike older methods that rely on fixed rules, RL uses data to continuously learn and improve restocking strategies. Here's what you need to know:

- What RL Does: RL systems make decisions based on inventory levels, customer demand, and cost factors, aiming to minimize holding costs and prevent stockouts.

- Why It Matters: Fixed-rule systems struggle with unpredictable demand and complex supply chains. RL adapts as conditions change, which can improve performance across a wider range of scenarios.

- Key Benefits:

  - Improved demand forecasting without relying on rigid assumptions.

  - Real-time adjustments to inventory levels for better efficiency.

  - Lower costs by balancing stock levels and avoiding waste.

Big players like Amazon and UPS are already applying RL to optimize their supply chains. Deep RL algorithms such as Deep Q-Networks (DQN) and Proximal Policy Optimization (PPO) have shown measurable gains in published studies, including reduced costs and improved service levels. Implementation requires careful planning, but the potential for cost savings and operational improvements is substantial.

Can Deep Reinforcement Learning Improve Inventory Management?, Joren Gijsbrechts


Benefits of Using Reinforcement Learning for Inventory Optimization

Reinforcement Learning vs Traditional Inventory Management: Cost Impact Comparison

Better Demand Forecasting Accuracy

Reinforcement Learning (RL) models learn directly from data rather than from a predefined demand distribution or other fixed modeling assumptions. This lets RL draw on historical sales data, market trends, and external factors at the same time, which can yield more precise demand predictions. RL policies can also adapt to seasonal shifts and sudden market changes when they are retrained on recent data.

UPS and Amazon have integrated RL algorithms into their inventory systems to build smarter, more efficient strategies. For instance, a case study from 2022 to 2025 involving a retailer selling Coke found that a Deep Q-Network (DQN) outperformed a traditional (s, S) policy by adjusting order quantities based on factors like the day of the week and current inventory levels.
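For comparison, the (s, S) baseline fits in a few lines: when the inventory position (on-hand plus on-order stock) falls to or below a reorder point s, order enough to bring it back up to S; otherwise order nothing. A minimal sketch, with hypothetical parameter values:

```python
def s_S_order(inventory_position: int, s: int, S: int) -> int:
    """Order quantity under an (s, S) policy.

    inventory_position is on-hand plus on-order stock. At or below the reorder
    point s, order enough to return to S; above it, order nothing.
    """
    if inventory_position <= s:
        return S - inventory_position
    return 0

# Hypothetical parameters: reorder point 20 units, order-up-to level 60 units.
print(s_S_order(15, s=20, S=60))  # 45
print(s_S_order(35, s=20, S=60))  # 0
```

Because the rule sees only the inventory position, it orders the same amount on a Monday as on a Saturday. A DQN can take features such as the day of the week as inputs and learn a different quantity for each situation.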

Data scientist Sebastian Sarasti highlights the shift away from traditional methods:

"Traditional methods rely on linear models... Real-life scenarios don't follow a linear pattern... although linear models can help reduce overstocking and understocking, they can be improved with reinforcement learning."

Real-Time Inventory Adjustments

Beyond forecasting, RL enables real-time inventory adjustments. Instead of relying on fixed reorder points, an RL agent re-evaluates its ordering decision each period from current stock levels, customer demand, and supply chain variables, reducing the delays common with traditional forecasting models.

As Yulia Fedorova from numi.digital explains:

"Reinforcement learning offers a more adaptive approach by continuously learning from real-time data."

This capability is especially useful for e-commerce businesses managing thousands of SKUs across multiple fulfillment centers, where RL agents can weigh many data streams at once to support faster, better-informed ordering decisions.

Lower Holding Costs and Fewer Stockouts

Beyond better forecasting and real-time adjustments, RL helps businesses cut costs. It strikes a balance between minimizing storage expenses and avoiding lost sales. By maintaining only the stock that's truly necessary, RL reduces warehousing costs while predicting demand spikes to ensure products are available when needed.

Proximal Policy Optimization (PPO) has been reported to deliver higher profits than traditional heuristics across a range of supply chain configurations, and studies using real-world retail data find that deep RL outperforms classic models such as Economic Order Quantity (EOQ) on inventory turnover and service levels. The key mechanism is the reward function: it combines holding costs, stockout penalties, and ordering fees into a single objective that the agent optimizes directly, instead of following fixed rules.
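One common way to write such a reward is as the negative of total cost in period t. The symbols are illustrative assumptions, not taken from a specific study: I_t is stock left on hand at the end of the period, B_t is unmet demand, q_t is the order quantity, h is the holding cost per unit, p is the stockout penalty per unit, K is a fixed fee charged whenever an order is placed, and c is the unit purchase cost.

```latex
r_t = -\left( h\, I_t + p\, B_t + K\, \mathbf{1}[q_t > 0] + c\, q_t \right)
```

Maximizing the sum of r_t over time trades storage cost against lost sales. Dropping the penalty term p B_t and adding sales revenue turns this into the per-period profit form used later in this guide.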

Here's how RL optimizes key cost categories:

| Cost Category | Impact of Reinforcement Learning | Traditional Static Policy Limitation |
|---|---|---|
| Holding Costs | Reduces excess inventory by maintaining only necessary stock | Relies on "safety stock" buffers, leading to higher costs |
| Stockout Costs | Predicts demand surges to prevent shortages | Struggles with unexpected demand spikes |
| Ordering Costs | Balances order frequency and quantity for efficiency | Fixed schedules often cause inefficiencies |
| Profitability | Maximized through adaptive, real-time decisions | Limited by inability to respond to market changes |

This combination of precision, adaptability, and cost efficiency showcases how RL is transforming inventory management.

Core RL Techniques for Inventory Management

Now that we've outlined the advantages of reinforcement learning (RL), let's dive into the techniques that make it so effective for inventory management. Each method brings its own approach to solving inventory challenges, offering adaptable solutions for different supply chain scenarios.

Q-Learning for Restocking Decisions

Q-learning is a model-free RL method that helps managers develop optimal restocking strategies without requiring prior knowledge of demand patterns or cost structures. It works by estimating "Q-factors" (q(x, a)), which predict the long-term value of ordering a specific quantity when the inventory is at a certain level.

Unlike static reorder systems with fixed thresholds, Q-learning adjusts both the timing and size of orders based on observed data. At each step the agent observes current inventory, places an order, receives a reward reflecting revenue and costs, and updates its estimates. In reported simulations, Q-learning shows noticeable improvements in inventory performance after 1,000,000 steps and approaches near-optimal results by 5,000,000 steps. It often converges to an (s, S) policy, in which a large order is placed only when stock dips below a critical level s. Research suggests that Q-learning outperforms traditional "order-up-to" policies, especially when demand is low relative to warehouse capacity.

| Component | Inventory Management Definition |
|---|---|
| State | Current inventory, including on-hand stock and items on order |
| Action | Quantity of units to order |
| Reward | Revenue minus costs (holding, ordering, and penalties for stockouts) |
| Policy | The decision rule for order quantities based on inventory levels |
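To make the table concrete, here is a minimal tabular Q-learning sketch on a toy single-SKU problem. Everything in it is an assumption for illustration: zero lead time (so on-order stock is always zero and the state reduces to on-hand inventory), uniform demand of 0 to 8 units per period, and made-up prices and costs. The update line implements the standard rule q(x, a) ← q(x, a) + α[r + γ max_b q(x′, b) - q(x, a)].

```python
import random
from collections import defaultdict

# Hypothetical toy problem; every number here is an illustrative assumption.
CAPACITY = 20         # warehouse capacity (units)
PRICE = 4.0           # revenue per unit sold
UNIT_COST = 2.0       # purchase cost per unit received
ORDER_COST = 5.0      # fixed cost per order placed
HOLDING_COST = 1.0    # cost per unit left on hand at period end
STOCKOUT_COST = 10.0  # penalty per unit of unmet demand
ACTIONS = range(CAPACITY + 1)  # order 0..CAPACITY units

def step(inventory, order, rng):
    """One period with zero lead time: receive stock, serve demand, score it."""
    stock = min(inventory + order, CAPACITY)  # excess beyond capacity is refused
    received = stock - inventory
    demand = rng.randint(0, 8)                # assumed uniform demand, 0..8 units
    sold = min(stock, demand)
    next_inv = stock - sold
    reward = (PRICE * sold - UNIT_COST * received
              - (ORDER_COST if received > 0 else 0.0)
              - HOLDING_COST * next_inv - STOCKOUT_COST * (demand - sold))
    return next_inv, reward

def q_learning(episodes=500, horizon=50, alpha=0.1, gamma=0.95, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    q = defaultdict(float)  # q[(inventory, order)] -> estimated long-term value
    for _ in range(episodes):
        inv = rng.randint(0, CAPACITY)
        for _ in range(horizon):
            if rng.random() < epsilon:        # explore
                a = rng.choice(ACTIONS)
            else:                             # exploit current estimates
                a = max(ACTIONS, key=lambda b: q[(inv, b)])
            nxt, r = step(inv, a, rng)
            target = r + gamma * max(q[(nxt, b)] for b in ACTIONS)
            q[(inv, a)] += alpha * (target - q[(inv, a)])  # Q-learning update
            inv = nxt
    return q

q = q_learning()
policy = {x: max(ACTIONS, key=lambda a: q[(x, a)]) for x in range(CAPACITY + 1)}
print(policy)  # greedy order quantity for each on-hand level
```

A run this short will not converge the way the multi-million-step simulations above do, so the printed policy is only a rough estimate. With a fixed ordering cost in the reward, converged policies in this kind of setup tend toward the (s, S) shape: no order above some stock level, a top-up order below it.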

While Q-learning focuses on reacting to real-time data, model-based RL offers a proactive way to plan inventory strategies.

Model-Based RL for Demand Simulation

Model-based RL takes a different route by building a virtual model of the inventory system. This allows agents to simulate various scenarios and apply "what-if" strategies before making actual decisions. As an IIETA publication describes it:

"Model-based RL imbues the agents with the ability to visualize alternative situations, apply the what-if approach, and assess consequences, all in a virtual space, before executing any action in the real world."

This approach tends to be data-efficient. Algorithms like Dyna-Q combine real observations with simulated experience generated by the learned model, getting more value out of limited real-world data. Model-based RL can also capture complex dynamics such as fluctuating demand, supplier behavior, and unexpected disruptions. By integrating real-time inputs from ERP and warehouse management systems, the model keeps evolving with market conditions, helping the agent predict outcomes more accurately and reduce uncertainty.
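The Dyna-Q idea can be sketched in a few lines: every real transition updates the Q-table directly and is also stored in a learned model, which is then sampled to generate extra simulated updates. This sketch follows the classic tabular form, in which the model simply remembers the last reward and next state seen for each (state, action) pair. The function name, parameters, and defaults are illustrative choices, not any library's API.

```python
import random
from collections import defaultdict

def dyna_q_step(q, model, state, action, reward, next_state, actions,
                alpha=0.1, gamma=0.95, planning_steps=10, rng=random):
    """One tabular Dyna-Q step for a single real transition.

    q:     defaultdict mapping (state, action) -> value estimate
    model: dict mapping (state, action) -> (reward, next_state) last observed
    """
    def td_update(s, a, r, s_next):
        best_next = max(q[(s_next, b)] for b in actions)
        q[(s, a)] += alpha * (r + gamma * best_next - q[(s, a)])

    td_update(state, action, reward, next_state)   # 1. learn from real experience
    model[(state, action)] = (reward, next_state)  # 2. update the learned model
    seen = list(model)
    for _ in range(planning_steps):                # 3. learn from simulated experience
        s, a = rng.choice(seen)
        r, s_next = model[(s, a)]
        td_update(s, a, r, s_next)

# Hypothetical transition: 5 units on hand, ordered 3, earned -2.0, ended with 6.
q, model = defaultdict(float), {}
dyna_q_step(q, model, state=5, action=3, reward=-2.0, next_state=6,
            actions=range(11), rng=random.Random(0))
print(round(q[(5, 3)], 3))  # -1.372
```

One real transition produced eleven value updates here, which is the data efficiency described above. With stochastic demand, remembering only the last outcome is a simplification; sampling from all observed outcomes, or from a fitted demand distribution, is a common refinement.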

Deep RL for Complex Supply Chains

When managing thousands of SKUs across multiple locations, tabular Q-learning falls short: it stores a separate value for every state-action pair, and the number of pairs grows rapidly with each added SKU and location. Deep Reinforcement Learning (DRL) addresses this by using neural networks to approximate values or policies over large state and action spaces. This makes it especially useful for e-commerce operations, where inventory decisions depend on a wide range of variables.

Asynchronous Advantage Actor-Critic (A3C) speeds up training by running many actors in parallel, while Proximal Policy Optimization (PPO) keeps learning stable by limiting how far each update can move the policy, which helps it adapt to different warehouse setups. Research has shown that PPO often achieves higher average profits and adapts more effectively to diverse supply chain configurations than simpler methods.
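The stability attributed to PPO comes from its clipped surrogate objective, which caps how much a single update can change the probability of an action. In the standard formulation from the original PPO paper, ρ_t(θ) is the ratio of new to old action probabilities (written r_t(θ) in that paper), Â_t is an advantage estimate, and ε is a small clipping parameter (0.2 is a common default). For inventory control, s_t would be the observed inventory state and a_t the order quantity.

```latex
\rho_t(\theta) = \frac{\pi_\theta(a_t \mid s_t)}{\pi_{\theta_{\text{old}}}(a_t \mid s_t)}, \qquad
L^{\text{CLIP}}(\theta) = \hat{\mathbb{E}}_t\left[ \min\left( \rho_t(\theta)\,\hat{A}_t,\ \operatorname{clip}\left(\rho_t(\theta),\, 1-\epsilon,\, 1+\epsilon\right)\hat{A}_t \right) \right]
```

Once ρ_t(θ) leaves the interval [1 - ε, 1 + ε] in the direction that would increase the objective, the clipped term stops rewarding further movement, so one noisy batch of demand data cannot swing the ordering policy too far.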

For example, one reported DRL model built on Convolutional Neural Networks (CNNs) and trained on four years of data achieved a 3.2% demand forecast error, a 22% reduction in inventory costs (saving around $2,030), and a 2.1% out-of-stock rate. Such systems can extract meaningful patterns from historical demand data, improving forecasting accuracy even in volatile markets. DRL can also balance competing goals, such as maximizing service levels while keeping holding and logistics costs low, within a single framework. For operations spanning multiple locations, Multi-Agent Reinforcement Learning (MARL) enables decentralized decision-making based on local data, making it an effective tool for multi-echelon supply chains.

How to Implement RL in Your Inventory Management System

Reinforcement Learning (RL) can transform inventory management, but implementing it requires a clear and structured approach. Building on the techniques and benefits discussed earlier, here’s how to put RL into action for your system.

Define States, Actions, and Rewards

At the heart of any RL system are three key elements: states, actions, and rewards. These define what the RL agent observes, the decisions it can make, and the goals it aims to achieve.

- States: These should give the agent a complete view of your inventory environment. Include on-hand stock, on-order inventory, and temporal factors such as the day of the week, which helps the agent identify recurring patterns like weekly demand cycles. Tracking on-order inventory, for example, prevents overstocking by accounting for shipments already on their way.

- Actions: These represent the choices your agent can make, such as how much stock to reorder. You can define actions as discrete quantities (e.g., 0 to maximum warehouse capacity) or as multiples of your Minimum Order Quantity (MOQ).

- Rewards: The reward system drives the agent toward your business objectives. Many models calculate profit for each period as (Sales Revenue) - (Holding Costs) - (Fixed Ordering Costs) - (Variable Unit Costs). Alternatively, you could focus on minimizing costs by penalizing stockouts and excessive inventory. As Peyman Kor explains: "The reward is the cost associated with both holding inventory and missing out on sales."

| Component | Implementation Considerations | Impact on Deployment |
|---|---|---|
| State | On-hand stock, On-order stock, Day of week, Lead time | Defines decision context and model complexity |
| Action | Order quantity, Multiples of MOQ, "No order" | Must align with warehouse constraints |
| Reward | Sales revenue, Holding costs, Stockout penalties | Shapes optimization objectives |
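A minimal sketch of how these three components might be encoded for a single SKU. The class and function names are hypothetical; the reward follows the per-period profit formula above, and the action set is "no order" plus multiples of an MOQ.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class InventoryState:
    on_hand: int      # units physically in stock
    on_order: int     # units ordered but not yet received
    day_of_week: int  # 0 = Monday ... 6 = Sunday

def action_space(moq: int, max_units: int) -> list[int]:
    """Allowed order quantities: 'no order' plus multiples of the MOQ up to max_units."""
    return [0] + list(range(moq, max_units + 1, moq))

def period_profit(units_sold, unit_price, ending_on_hand, holding_cost,
                  order_qty, fixed_order_cost, unit_cost):
    """Reward = sales revenue - holding costs - fixed ordering cost - variable unit costs."""
    revenue = units_sold * unit_price
    holding = ending_on_hand * holding_cost
    fixed = fixed_order_cost if order_qty > 0 else 0.0
    variable = order_qty * unit_cost
    return revenue - holding - fixed - variable

state = InventoryState(on_hand=40, on_order=24, day_of_week=2)
print(action_space(moq=12, max_units=60))  # [0, 12, 24, 36, 48, 60]
print(period_profit(units_sold=30, unit_price=5.0, ending_on_hand=10,
                    holding_cost=0.5, order_qty=24, fixed_order_cost=20.0,
                    unit_cost=2.0))        # 77.0
```

In the example, 30 units sold at $5 bring in $150; subtracting $5 of holding cost, a $20 order fee, and $48 of purchase cost leaves a reward of 77.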

To encourage early exploration, use optimistic initialization: set initial Q-values higher than any return the agent is likely to achieve, so untried actions look attractive until they have been tested. You can also penalize the agent whenever inventory falls below a safety stock level, pushing it toward higher customer service levels.
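Both tips are small changes in code. A sketch under assumed values: the optimistic starting value of 500 and the penalty of 3 per unit below a 10-unit safety stock are placeholders to tune against your own cost scale.

```python
from collections import defaultdict

def make_optimistic_q(initial_value: float) -> defaultdict:
    """Q-table in which every unseen (state, action) pair starts at initial_value.

    Pick a value above any return the agent can realistically earn, so a greedy
    agent keeps trying actions until experience pulls their estimates down.
    """
    return defaultdict(lambda: initial_value)

def shaped_reward(base_reward: float, on_hand: int, safety_stock: int,
                  penalty_per_unit: float) -> float:
    """Subtract a penalty for each unit that ending stock sits below safety stock."""
    shortfall = max(0, safety_stock - on_hand)
    return base_reward - penalty_per_unit * shortfall

q = make_optimistic_q(500.0)
print(q[(10, 5)])                                          # 500.0
print(shaped_reward(77.0, on_hand=6, safety_stock=10,
                    penalty_per_unit=3.0))                 # 65.0
```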

Train RL Models Using Simulated and Historical Data

Relying only on historical data can lead to poor results when demand patterns change. One remedy is domain randomization: by adding random noise to historical demand data during training, you expose the RL agent to a broader range of scenarios, making it more adaptable to real-world changes.
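The noise-injection step can be sketched with Python's standard library. Multiplicative Gaussian noise and the 20% default spread are assumptions; the experiments reported here may have used a different noise model. Each training episode would call the function to get a fresh demand path.

```python
import random

def randomized_demand(historical, noise_scale=0.2, rng=random):
    """Return a perturbed copy of a historical demand series.

    Each value is multiplied by a Gaussian factor centred on 1.0 with standard
    deviation noise_scale, rounded to whole units, and floored at zero.
    """
    return [max(0, round(d * rng.gauss(1.0, noise_scale))) for d in historical]

history = [12, 15, 9, 20, 18, 7, 11]  # hypothetical daily demand
rng = random.Random(42)
for episode in range(3):
    demand_path = randomized_demand(history, rng=rng)
    print(demand_path)                # a different plausible week each episode
```

Setting noise_scale to 0 reproduces the historical series exactly, which gives a convenient baseline for measuring how much randomization helps.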

For example, a DQN policy trained with domain randomization achieved an average profit of $21,887.77, compared to $20,163.84 for a policy trained solely on historical data. When demand rose slightly above historical norms, the domain-randomized policy still came out ahead, with a profit of $26,432.61 versus $25,077.61. Guangrui Xie highlights the value of this approach:

"The core idea of domain randomization is by randomizing the physical parameters of the simulated environment... the RL model will experience situations more like the real environment, hence the learned policies will generalize better".

Key training strategies include:

- Simulate diverse scenarios: Use domain randomization to expand the training dataset with varied demand patterns.

- Epsilon-greedy exploration: Introduce randomness during training to help the agent discover better strategies. Gradually reduce randomness (epsilon) as the model stabilizes.

- Simplify complex supply chains: For large networks, train RL agents on smaller sub-networks first to avoid overwhelming the model with too many variables.
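The decaying exploration schedule can be sketched as follows. A linear decay from 1.0 to 0.05 over 100,000 steps is an assumed choice; exponential decay is an equally common alternative.

```python
import random

def epsilon_at(step: int, eps_start: float = 1.0, eps_end: float = 0.05,
               decay_steps: int = 100_000) -> float:
    """Linearly anneal epsilon from eps_start to eps_end, then hold it there."""
    fraction = min(step / decay_steps, 1.0)
    return eps_start + fraction * (eps_end - eps_start)

def epsilon_greedy(q_values: list[float], epsilon: float, rng=random) -> int:
    """Return a random action index with probability epsilon, else the best one."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))
    return max(range(len(q_values)), key=q_values.__getitem__)

# Early in training almost every order quantity is chosen at random;
# late in training the agent mostly picks its highest-valued action.
for step in (0, 50_000, 200_000):
    print(step, round(epsilon_at(step), 3))
```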

A thorough training process makes your RL model more robust and better prepared for deployment.

Deploy and Monitor RL Policies

Deploying RL in a live environment can be challenging, so careful testing is essential. Compare your RL policy to a baseline "order-up-to" policy in a simulated setting to ensure it delivers better results - whether through cost savings or increased profits. For complex systems, limit order quantities to specific increments (e.g., multiples of 5 or 10) to simplify decision-making and reduce computational demands.
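A fair comparison runs both policies on the same demand paths, so that profit differences come from the policies rather than from luck. A sketch under simple assumptions (zero lead time, lost sales, uniform random demand, hypothetical prices and costs); the `order_up_to` baseline also rounds orders up to multiples of 5, matching the increment idea above.

```python
import random

def simulate(policy, demand, start_stock=20, price=4.0, unit_cost=2.0,
             holding_cost=0.5, stockout_cost=5.0):
    """Total profit of `policy` over one demand path (zero lead time, lost sales)."""
    stock, profit = start_stock, 0.0
    for d in demand:
        order = policy(stock)
        stock += order
        sold = min(stock, d)
        stock -= sold
        profit += (price * sold - unit_cost * order
                   - holding_cost * stock - stockout_cost * (d - sold))
    return profit

def order_up_to(level, increment=5):
    """Baseline: order up to `level`, rounded up to a multiple of `increment`."""
    def policy(stock):
        gap = max(0, level - stock)
        return -(-gap // increment) * increment  # ceiling to the next increment
    return policy

def compare(candidate, baseline, n_paths=200, horizon=52, seed=0):
    """Average profit difference (candidate - baseline) on shared demand paths."""
    rng = random.Random(seed)
    diffs = []
    for _ in range(n_paths):
        demand = [rng.randint(0, 10) for _ in range(horizon)]
        diffs.append(simulate(candidate, demand) - simulate(baseline, demand))
    return sum(diffs) / n_paths
```

A call such as `compare(rl_policy, order_up_to(level=15))` would report the RL policy's average profit advantage, where `rl_policy` is a hypothetical callable mapping current stock to an order quantity. A positive result on held-out demand paths is a reasonable gate before going live.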

Once live, continuous retraining is critical. Regularly update the model using recent demand data to adapt to market changes. Balance reward metrics to ensure the system prioritizes both service levels (avoiding stockouts) and cost control (minimizing excess inventory). Since RL decisions can sometimes lack transparency, maintain detailed logs of inventory levels and orders to validate the system’s performance. As Yulia Fedorova notes:

"RL decisions are often less transparent than rule-based methods, making them harder to justify in some business settings".

For added reliability, consider a hybrid approach. Combine traditional forecasting methods with RL-based adjustments to achieve both accuracy and adaptability. This blend leverages the strengths of proven forecasting techniques while taking advantage of RL’s dynamic optimization capabilities.

Combining RL with 3PL Logistics Services

Once your reinforcement learning (RL) system is running and its inventory decisions are being monitored, the next logical step is to team up with a logistics partner that can execute those decisions quickly and precisely. This is where third-party logistics (3PL) providers come in: they supply the infrastructure needed to turn RL-driven insights into actionable fulfillment strategies.

When RL is paired with 3PL expertise, its ability to evaluate costs, locations, and availability in real time extends across the entire distribution network rather than individual warehouses. For example, when disruptions such as delayed shipments or sudden demand spikes occur, RL models can dynamically reroute orders to more reliable distribution centers. Yulia Fedorova sums it up:

"RL optimizes supplier and warehouse selection based on real-time constraints (cost, location, availability)".

The benefits grow when your RL system accounts for in-transit inventory and fluctuating lead times in its decisions. This level of integration lets RL coordinate with 3PL transportation schedules, avoiding unnecessary reorders and keeping operations running smoothly.
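Accounting for in-transit stock usually means basing each decision on inventory position (on-hand plus on-order) rather than on-hand stock alone, so the agent does not reorder units already moving through the 3PL network. A minimal sketch, assuming a fixed lead time; the `Pipeline` class is a hypothetical helper.

```python
from collections import deque

class Pipeline:
    """Orders in transit under a fixed lead time (an assumed simplification)."""

    def __init__(self, lead_time: int):
        # Slot 0 holds the shipment that arrives at the next call to place().
        self.in_transit = deque([0] * lead_time)

    def place(self, qty: int) -> int:
        """Queue this period's order and return the units arriving now."""
        self.in_transit.append(qty)
        return self.in_transit.popleft()

    def on_order(self) -> int:
        """Units ordered but not yet received."""
        return sum(self.in_transit)

def inventory_position(on_hand: int, pipeline: Pipeline) -> int:
    """On-hand plus in-transit stock: the figure reorder decisions should use."""
    return on_hand + pipeline.on_order()

# Hypothetical two-period lead time: an order placed now lands two periods later.
pipe = Pipeline(lead_time=2)
arrived = pipe.place(10)            # nothing arrives this period
print(inventory_position(5, pipe))  # 15: 5 on hand + 10 in transit
```

With fluctuating lead times, a fixed-length queue no longer fits; shipments would instead be keyed by their expected arrival dates from the 3PL's tracking data.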

Optimizing Distribution and Fulfillment

For RL-driven inventory decisions to pay off, they need to be tightly integrated with 3PL operations. In a multi-echelon supply chain, where a central hub serves multiple regional retailers, RL can optimize the entire flow of goods rather than individual locations, balancing inventory across all nodes to minimize costs while maintaining high service levels.

A six-month simulation provides a glimpse into RL's potential: using the Soft Actor-Critic algorithm, an RL-based planner delivered a 90% boost in operating profit and a 6% increase in revenue compared to a heuristic-based approach. These gains were achieved by configuring the RL model to factor in distance-based fulfillment costs, ensuring 3PL resources were deployed cost-effectively.

To reach this level of optimization, it's crucial to define multi-objective rewards that consider factors like fulfillment costs, holding costs, and penalties for stockouts (e.g., "loss of goodwill"). When your 3PL partner provides real-time shipment visibility, the RL model can make smarter decisions about order timing and quantities. By extending RL's adaptive decision-making into the logistics network, you lay the groundwork for leveraging 3PL infrastructures - such as those offered by JIT Transportation - to operationalize RL strategies.

Using JIT Transportation's Scalable Infrastructure

To fully capitalize on RL's capabilities, partnering with a specialized provider like JIT Transportation is key. Their nationwide network and advanced technology offer the foundation RL models need to perform at their best. Scalable 3PL systems provide real-time data - such as ASNs, EDI/API updates, and carrier milestones - that feed directly into RL agents' decision-making processes. Ashley Taylor, a product manager at Cleverence, describes it this way:

"JIT is essentially a signal-to-execution conversion engine".

This seamless data integration enables RL systems to skip deep storage and move goods directly from inbound to outbound through cross-docking and flow-through operations. By reducing the amount of inventory sitting idle, JIT Transportation not only lowers holding costs but also improves response times to shifting demand. Their Warehouse Management Systems (WMS) and Transportation Management Systems (TMS) ensure that RL-driven actions - like dynamic reorder points - are executed efficiently through tools like RF scanning and automated tasking.

Before deploying RL with a 3PL partner, make sure your master data (such as SKUs, pack sizes, and units of measure) is standardized to avoid costly errors like rush fees or stockouts. Start small with a pilot program, testing RL-driven inventory optimization on a specific product line or logistics lane, before scaling up to the entire network. To prevent issues during the learning phase, implement guardrails like capping inventory targets or limiting action frequency, which can help avoid erratic behavior or "reward hacking".
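These guardrails can sit as a thin layer between the agent and the order interface sent to the 3PL. A sketch with hypothetical parameter names: the cap on inventory position and the minimum gap between orders are business-set limits, not values the agent learns.

```python
def guarded_order(proposed_qty: int, inventory_position: int, max_position: int,
                  periods_since_last_order: int, min_gap_periods: int) -> int:
    """Clamp an RL agent's proposed order before it is sent to the 3PL.

    - Frequency limit: place nothing until min_gap_periods have passed.
    - Cap: never let inventory position rise above max_position.
    """
    if periods_since_last_order < min_gap_periods:
        return 0
    headroom = max(0, max_position - inventory_position)
    return max(0, min(proposed_qty, headroom))

# A runaway proposal of 500 units is trimmed to the 20 units of headroom.
print(guarded_order(500, inventory_position=80, max_position=100,
                    periods_since_last_order=3, min_gap_periods=2))  # 20
```

Logging every case where the guardrail changes the agent's proposal also gives an early signal of reward hacking during the pilot.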

Conclusion

Reinforcement learning (RL) is changing the way inventory management works by moving away from rigid, rule-based systems to decisions shaped by dynamic, real-time data. Traditional methods, which depend on fixed reorder points, often falter when faced with unpredictable demand. RL, on the other hand, allows systems to learn optimal ordering strategies directly from data, adapting seamlessly to demand shifts, supply chain disruptions, and seasonal trends - all without relying on predefined mathematical models.

As Sridhar Subramanian, Smita Mahajan, and Shrikrishna Kolhar put it:

"Using RL would allow organizations to develop the niche of self-regulatory decision-making since computer-based learning would consider dynamic data and feedback as the base".

Advanced RL algorithms like PPO and A3C have demonstrated their ability to rival or even surpass traditional heuristics. They also bring the flexibility needed to manage complex, multi-level supply chains. Industry leaders such as UPS and Amazon have already embraced RL to streamline and enhance their supply chain operations.

However, the success of RL in inventory management depends on more than just algorithms. Execution is key. Even the most advanced RL models need the support of a dependable third-party logistics (3PL) partner to make their insights actionable. This includes ensuring access to real-time data, maintaining consistent lead times, and having the infrastructure to translate RL-driven insights into tangible warehouse operations and shipments.

This guide has outlined how RL can revolutionize inventory management, but the transformation is incomplete without the right 3PL partner. Start small by piloting RL on a specific product line, using both simulated and historical data. Make sure your reward functions cover all key costs - like holding, shipping, and stockouts. Finally, partner with a 3PL provider like JIT Transportation to bring your RL strategies to life. By applying RL techniques effectively, you can reduce stockouts and stay competitive in today’s fast-moving e-commerce landscape.

FAQs

What data do I need to start using RL for inventory decisions?

Reinforcement learning (RL) can be a powerful tool for managing inventory decisions, but it relies on having the right data. Some of the key inputs include historical sales data to understand demand trends, real-time inventory levels, lead times, and details about supply chain conditions. This information allows the RL model to evaluate the current situation, test different actions, and improve its decision-making policies over time. Adding IoT data into the mix can make the process even better by offering real-time, dynamic updates that help fine-tune decisions.

How do I design a reward function that avoids stockouts without overstocking?

To create a reward function that helps avoid stockouts and overstocking, you need to strike a balance between inventory costs and service levels. Here's how it works:

- Use negative rewards to penalize both stockouts (unmet demand) and excess inventory (storage and holding costs).

- Assign positive rewards for successfully meeting demand without running out of stock.

The key is to adjust the penalty weights based on your specific operational priorities. This fine-tuning ensures you maintain optimal inventory levels while keeping costs as low as possible.

How can RL integrate with a 3PL like JIT Transportation for faster fulfillment?

Reinforcement learning (RL) works hand-in-hand with 3PL providers like JIT Transportation by using real-time data to streamline inventory and supply chain operations. RL models can recommend decisions on order quantities, routing, and scheduling, allowing JIT to adapt quickly to fluctuating demand, cut lead times, and speed up order fulfillment. With this dynamic approach, businesses can respond more effectively, reduce delays, and keep their supply chains running smoothly.

Related Blog Posts

Related Articles

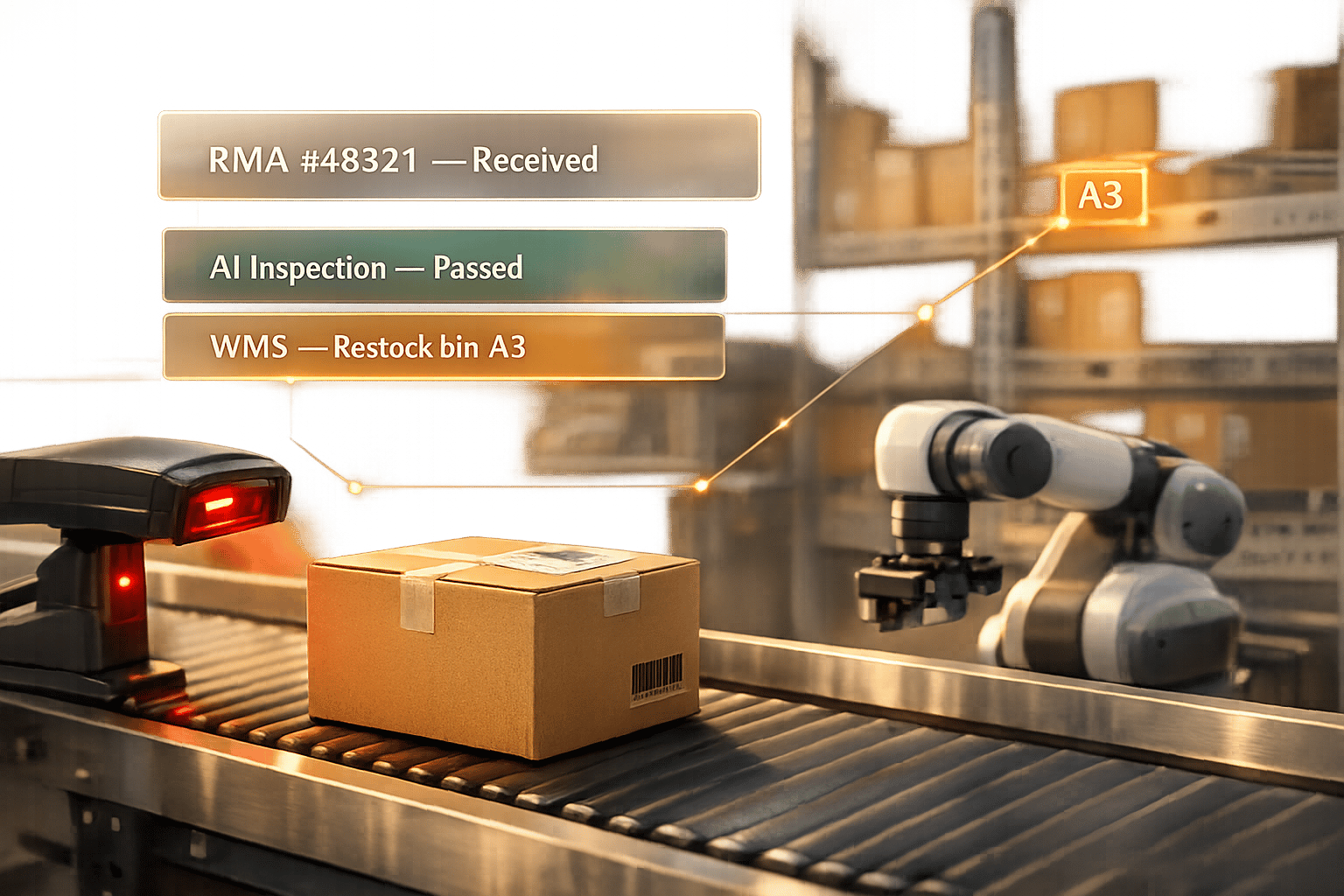

How 3PLs Use Automation for Returns Management

GDPR Compliance for AI Forecasting in Logistics